LinkedIn Said My Report Didn't Matter. Five Weeks Later It Was a Federal Lawsuit

On April 7, 2026, two federal class-action lawsuits landed in the Northern District of California. The allegations: LinkedIn had been running hidden JavaScript that scanned users' browsers for over 6,000 installed extensions, fingerprinted their devices, and routed the data to third-party vendors without disclosure.

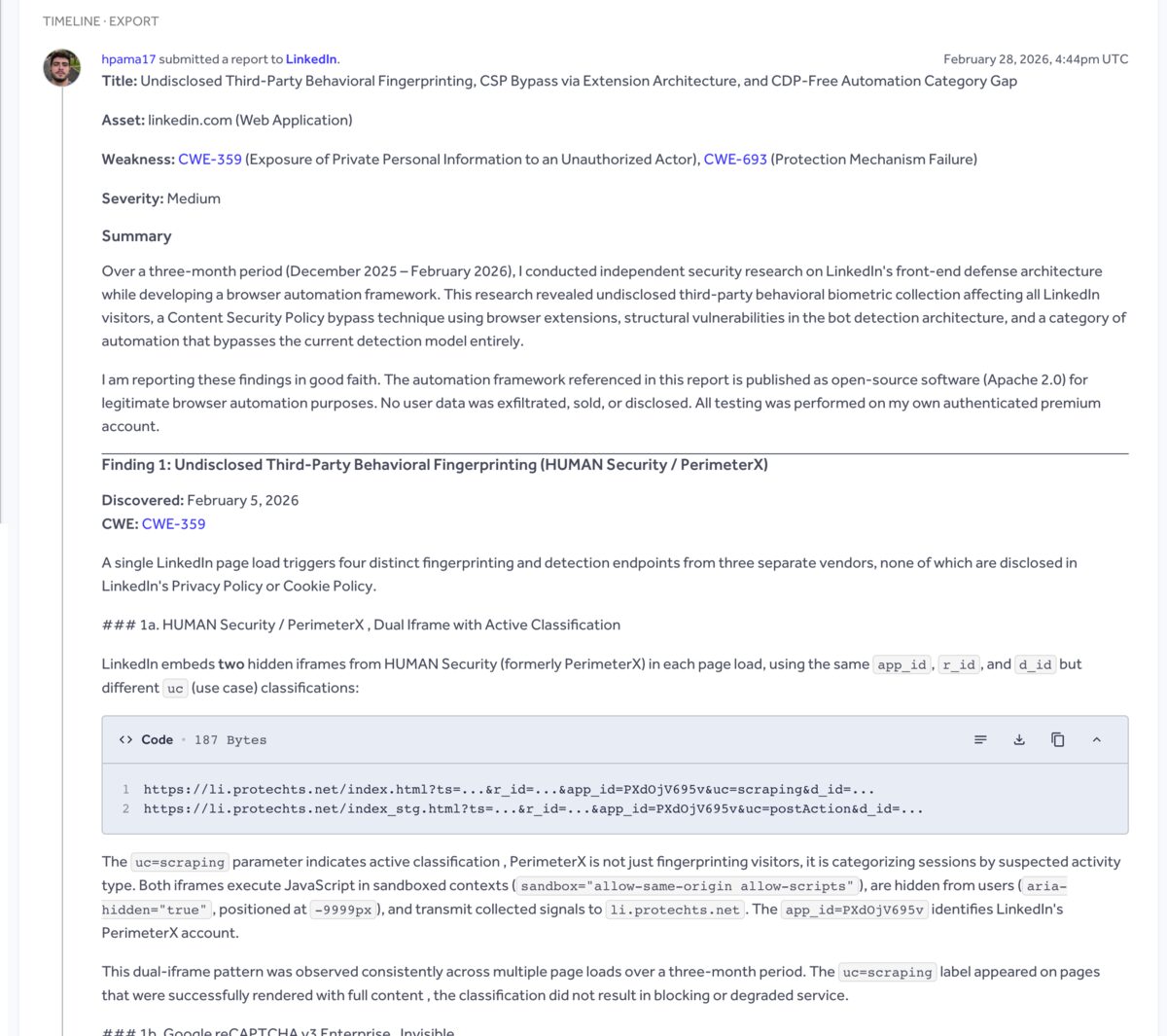

Five weeks earlier, on February 28, I'd submitted a security disclosure to LinkedIn through their HackerOne program documenting the same infrastructure. Seven findings. Undisclosed third-party fingerprinting, a Content Security Policy bypass, behavioral biometric collection without consent, and structural gaps in their bot detection architecture. Three months of research. Hundreds of debug dumps analyzed, 151 of which survived my disk space purges.

LinkedIn's response: "Informative."

I have no connection to the lawsuits. No connection to Fairlinked, the German advocacy group whose reverse engineering triggered the legal action. No connection to the plaintiffs or law firms. I was in Brazil, debugging a job scraper on my own premium account, and I found the same plumbing they found because the plumbing loads on every single page.

Two independent investigations. Same infrastructure. Different continents. The HackerOne disclosure is private. It was private when I submitted it and it stayed private. Nobody outside LinkedIn's security team could have seen it. My scraper code never went public either. It lived in a private GitHub repository. If you want the irony: GitHub is owned by Microsoft, which also owns LinkedIn. So the evidence was technically sitting on their own infrastructure the whole time.

I Was Debugging a Scraper

December 16, 2025. First scrape. I'd been learning web development for about a month, coming from mechatronics and robotics. The goal was simple: collect job postings from LinkedIn for a personal matching platform. I wrote about the scraper's architecture in "2KB to 2GB" (the Bézier cursor paths, the epsilon-greedy exploration, the whole RTOS-style orchestrator). That scraper ran for three months. Over 8,000 jobs collected.

The security research was an accident.

LinkedIn has some of the most aggressive anti-bot defenses on the internet. My scraper broke constantly. And because you can't diagnose a failure without a baseline, I dumped every error. I also sampled 5% of successful page loads. Not research. Just "when this thing breaks at 2am, I want to know why."
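A minimal Python sketch of that dump policy: keep every failure, keep roughly one success in twenty as a baseline. This is not the scraper's actual code; the function name, the constant, and the fixed seed are illustrative assumptions.

```python
import random

SUCCESS_SAMPLE_RATE = 0.05  # the 5% baseline sample described above


def should_dump(succeeded: bool, rng: random.Random) -> bool:
    """Return True when a page load should be written to disk.

    Failures are always kept. Successes are kept with 5% probability so
    there is a healthy baseline to diff the failures against.
    """
    if not succeeded:
        return True
    return rng.random() < SUCCESS_SAMPLE_RATE


# Demo: the seed is fixed only so repeated runs behave the same.
rng = random.Random(42)
kept = sum(should_dump(True, rng) for _ in range(10_000))
print(f"kept {kept} of 10000 successful loads")
```

Sampling per load, rather than dumping every twentieth load, keeps the baseline from lining up with any periodic behavior in the scraper itself.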

Over three months, those dumps accumulated into a complete map of LinkedIn's front-end defense architecture. PerimeterX iframes. reCAPTCHA integration. A/B testing framework controlling rendering paths. Behavioral tracking pipeline. All of it showed up because all of it loads on every page. I didn't go looking for surveillance. I was trying to figure out why my click handler was returning { clicked: true } while nothing on screen was happening.

Turns out an invisible full-viewport iframe was eating my events. That's Finding 5 in the report. But I'm getting ahead of myself.

Seven Findings, One "Informative"

By late February I had enough documented to write a proper disclosure. I bundled everything into a single structured report (one well-researched report from a new account looks like research; seven separate reports look like spam) and submitted through LinkedIn's official HackerOne program.

The report covered:

The actual HackerOne submission. Ticket #3578653, submitted February 28, 2026.

Finding 1: Undisclosed third-party fingerprinting. Every LinkedIn page load triggers four fingerprinting endpoints from three vendors, none disclosed in the privacy policy. Two hidden iframes from HUMAN Security (formerly PerimeterX) pointing to li.protechts.net with LinkedIn's app ID. One of them carries the parameter uc=scraping, which means it's not just fingerprinting visitors. It's classifying sessions by suspected activity type. Google reCAPTCHA v3 Enterprise running invisible in the background. And a first-party fingerprint frame on merchantpool1.linkedin.com. Three vendors, four endpoints. Privacy policy mentions none of them.

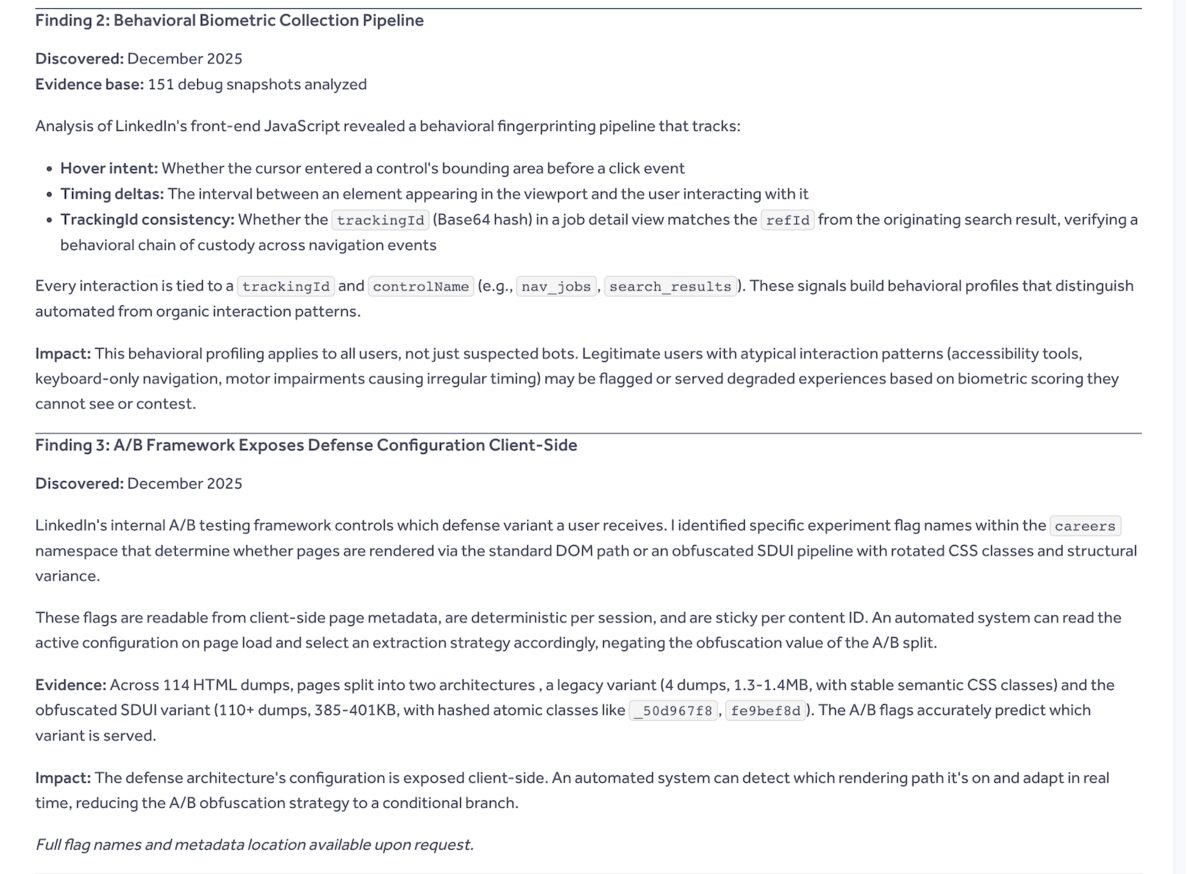
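One way to pull that inventory out of a saved page dump, sketched in Python with only the standard library. The hostnames and the `uc` parameter come from the finding above; the sample markup, the URL paths, and the `PLACEHOLDER` app ID are made up for illustration and are not LinkedIn's real values.

```python
from html.parser import HTMLParser
from urllib.parse import parse_qs, urlsplit


class IframeCollector(HTMLParser):
    """Collect the src attribute of every <iframe> in a page dump."""

    def __init__(self) -> None:
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "iframe":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def summarize_iframes(html: str) -> list:
    """Return the host and the `uc` query parameter (if any) per iframe."""
    collector = IframeCollector()
    collector.feed(html)
    summary = []
    for src in collector.sources:
        parts = urlsplit(src)
        query = parse_qs(parts.query)
        summary.append({
            "host": parts.hostname,
            "uc": query.get("uc", [None])[0],
        })
    return summary


# Hypothetical dump fragment; only the hostnames are taken from the report.
sample = (
    '<iframe src="https://li.protechts.net/?app_id=PLACEHOLDER&amp;uc=scraping">'
    "</iframe>"
    '<iframe src="https://merchantpool1.linkedin.com/"></iframe>'
)
print(summarize_iframes(sample))
# [{'host': 'li.protechts.net', 'uc': 'scraping'},
#  {'host': 'merchantpool1.linkedin.com', 'uc': None}]
```

Run across a folder of dumps, a summary like this makes it easy to see whether the same endpoints show up on every page or only on some.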

Finding 2: Behavioral biometric collection. 151 debug snapshots analyzed. LinkedIn's front-end JavaScript tracks hover intent (whether your cursor entered an element's bounding area before clicking), timing deltas between elements appearing and users interacting, and cross-navigation tracking ID consistency. Every interaction tied to a trackingId and controlName. This applies to all users, not just suspected bots. Someone using a screen reader or keyboard-only navigation with irregular timing could get flagged by scoring they can't see or contest.
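To make those two signals concrete, here is a small Python sketch that derives them from a pointer event log. It illustrates what "hover intent" and "timing delta" mean, under an assumed event format. It is not LinkedIn's code, and every name in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    left: float
    top: float
    width: float
    height: float

    def contains(self, x: float, y: float) -> bool:
        return (self.left <= x <= self.left + self.width
                and self.top <= y <= self.top + self.height)


@dataclass
class PointerEvent:
    kind: str    # "move" or "click"
    x: float
    y: float
    t_ms: float  # timestamp in milliseconds


def interaction_signals(rect: Rect, appeared_ms: float, events: list) -> dict:
    """Derive both signals for the first click that lands inside `rect`."""
    entered_before_click = False
    for ev in events:
        inside = rect.contains(ev.x, ev.y)
        if ev.kind == "move" and inside:
            entered_before_click = True
        elif ev.kind == "click" and inside:
            return {
                "hover_intent": entered_before_click,
                "appear_to_click_ms": ev.t_ms - appeared_ms,
            }
    return {"hover_intent": False, "appear_to_click_ms": None}


button = Rect(left=100, top=100, width=80, height=30)

# A mouse user: the cursor travels into the button, then clicks.
with_mouse = [
    PointerEvent("move", 10, 10, 300),
    PointerEvent("move", 120, 110, 700),
    PointerEvent("click", 120, 110, 850),
]
print(interaction_signals(button, appeared_ms=100, events=with_mouse))
# {'hover_intent': True, 'appear_to_click_ms': 750}

# A click with no preceding pointer movement inside the element.
no_hover = [PointerEvent("click", 140, 115, 105)]
print(interaction_signals(button, appeared_ms=100, events=no_hover))
# {'hover_intent': False, 'appear_to_click_ms': 5}
```

The second case is the accessibility concern in miniature: a keyboard or assistive-technology activation can also arrive with no hover, which is the same shape a naive script produces.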

Finding 3: A/B defense configuration exposed client-side. LinkedIn's internal experiment flags determine which rendering path you get. Legacy Ember DOM (stable CSS classes, 1.3-1.4MB pages) or the obfuscated SDUI pipeline (hashed atomic classes like _50d967f8, 385-401KB pages). These flags are readable from page metadata. They're deterministic per session. An automated system reads the active config on load and adapts its extraction strategy. The obfuscation becomes a conditional branch, not a defense. I proved this across 114 HTML dumps. Four used the legacy variant. 110+ used SDUI. The flags predicted which one was served every time.

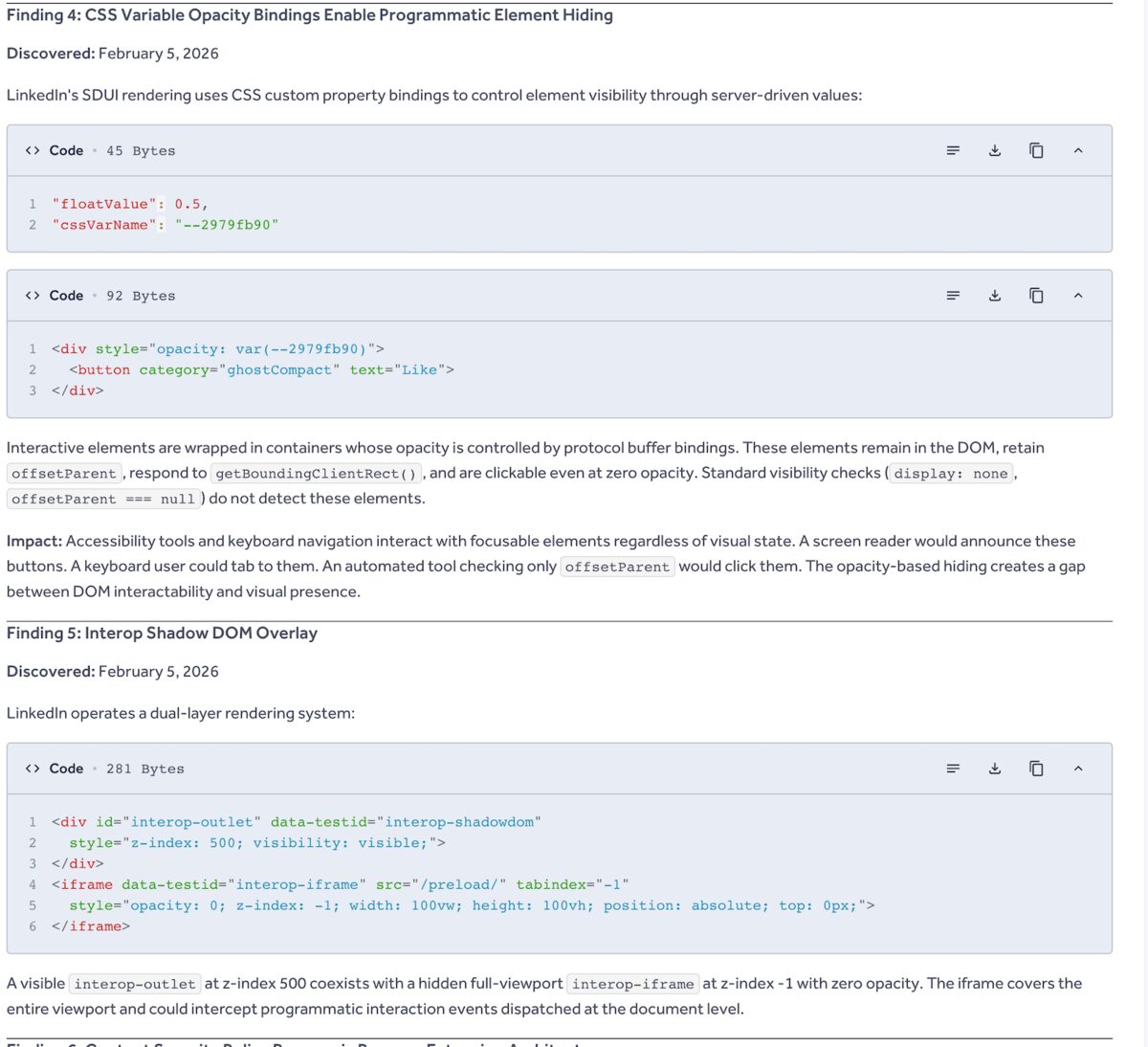
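Checking whether a flag predicted the served variant needs an independent label for each dump. A rough Python heuristic for that label: measure how many class tokens match the hashed shape quoted above (an underscore followed by eight hex characters). The 0.5 threshold and the sample class names are assumptions, and the sketch deliberately does not read the experiment flags, whose format is not reproduced here.

```python
import re

# Assumption: SDUI class tokens look like "_50d967f8".
HASHED_CLASS = re.compile(r"^_[0-9a-f]{8}$")
CLASS_ATTR = re.compile(r'class="([^"]*)"')


def hashed_class_ratio(html: str) -> float:
    """Fraction of class tokens in the dump that look like hashed atoms."""
    tokens = [tok for attr in CLASS_ATTR.findall(html) for tok in attr.split()]
    if not tokens:
        return 0.0
    hashed = sum(1 for tok in tokens if HASHED_CLASS.match(tok))
    return hashed / len(tokens)


def classify_dump(html: str, threshold: float = 0.5) -> str:
    """Label a dump "sdui" or "ember" from its class names alone."""
    return "sdui" if hashed_class_ratio(html) >= threshold else "ember"


# Made-up fragments, not real LinkedIn markup.
print(classify_dump('<div class="_50d967f8 _a1b2c3d4"><a class="_0f9e8d7c"></a></div>'))
# sdui
print(classify_dump('<div class="job-card job-card--active"><a class="title"></a></div>'))
# ember
```

Page size, the other tell mentioned above, would work as a second independent label if the class heuristic ever turned out to be ambiguous.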

Finding 4: CSS variable opacity traps. LinkedIn's SDUI uses protocol buffer bindings to control element opacity through CSS custom properties. Buttons wrapped in containers whose opacity is server-driven to zero. Invisible, but still in the DOM. Still respond to getBoundingClientRect(). Still clickable. Standard visibility checks don't catch them. A screen reader would announce these buttons. A keyboard user could tab to them. An automated tool checking only standard properties would click an invisible element and expose itself. I spent hours before I found this one. The elements looked real. They measured real. They just weren't visible.
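The reason a per-element check misses this is that opacity composites up the ancestor chain, and the zero can live in a custom property several levels above the button. A simplified Python model of the check that does catch it follows. The `Node` structure and the `--btn-opacity` name are hypothetical, and real CSS resolution has more cases than this handles.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Matches var(--name) and var(--name, fallback).
VAR_REF = re.compile(r"^var\(\s*(--[\w-]+)\s*(?:,\s*([^)]+))?\)$")


@dataclass
class Node:
    style: dict = field(default_factory=dict)
    parent: Optional["Node"] = None


def lookup_custom_property(node: Optional[Node], name: str) -> Optional[str]:
    """Custom properties inherit, so walk up until one is defined."""
    while node is not None:
        if name in node.style:
            return node.style[name]
        node = node.parent
    return None


def own_opacity(node: Node) -> float:
    """Opacity declared on this node alone, with var() resolved."""
    raw = node.style.get("opacity", "1").strip()
    match = VAR_REF.match(raw)
    if match:
        name, fallback = match.groups()
        raw = lookup_custom_property(node, name) or fallback or "1"
    return max(0.0, min(1.0, float(raw)))


def effective_opacity(node: Node) -> float:
    """Opacity is not inherited, but it multiplies through every ancestor."""
    result = 1.0
    current: Optional[Node] = node
    while current is not None:
        result *= own_opacity(current)
        current = current.parent
    return result


# A wrapper whose opacity is driven to zero through a custom property.
wrapper = Node(style={"--btn-opacity": "0", "opacity": "var(--btn-opacity)"})
button = Node(style={"opacity": "1"}, parent=wrapper)

print(own_opacity(button))        # 1.0 (the button alone looks fine)
print(effective_opacity(button))  # 0.0 (the trap: invisible on screen)
```

In a browser the equivalent is to walk `parentElement` and multiply the computed opacity at each step instead of trusting the target element's own value.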

Finding 5: Interop shadow DOM overlay. A full-viewport invisible iframe (opacity: 0, z-index: -1, covering 100vw x 100vh) coexisting with a visible interop-outlet at z-index: 500. This is the one that was eating my click events. Programmatic clicks dispatched at document level were being intercepted by the iframe. Hours of debugging for a result that made zero sense until I found the overlay.

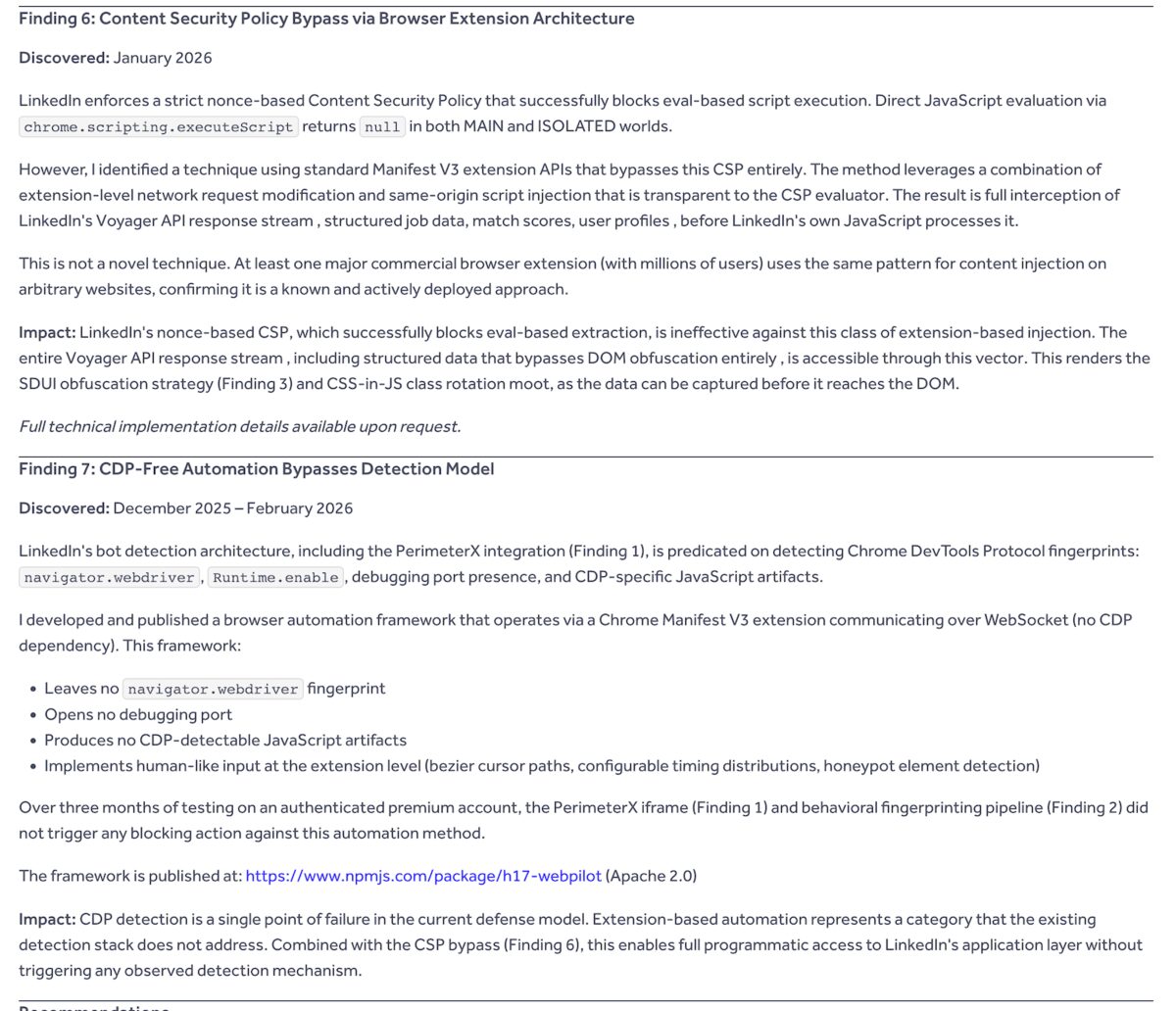

Finding 6: CSP bypass via browser extensions. LinkedIn's nonce-based Content Security Policy blocks eval-based script injection. Successfully. But I found a technique using standard Manifest V3 extension APIs that bypasses it entirely. Extension-level network request modification combined with same-origin script injection is transparent to the CSP evaluator. Full interception of LinkedIn's Voyager API response stream before their own JavaScript processes it. Structured job data, match scores, user profiles. All accessible before it reaches the DOM. The SDUI obfuscation from Finding 3 becomes irrelevant because you're reading the data before it gets rendered.

I didn't invent this. At least one major commercial extension with millions of users (NordVPN) uses the same pattern to inject scripts into arbitrary websites. I just documented that it works against LinkedIn's defenses specifically.

Finding 7: CDP-free automation bypasses the detection model entirely. LinkedIn's bot detection is predicated on Chrome DevTools Protocol fingerprints. navigator.webdriver, debugging ports, CDP-specific JavaScript artifacts. The automation framework I'd built operates via a Chrome extension communicating over WebSocket. No CDP dependency. No navigator.webdriver fingerprint. No debugging port. Over three months, the PerimeterX iframe and behavioral fingerprinting pipeline didn't trigger a single blocking action against it.

That framework eventually became h17-webpilot. Published open-source. Apache 2.0. Zero site-specific code.

I offered LinkedIn the full scraper code, the exact behavioral datasets I'd used to bypass PerimeterX and reCAPTCHA, and three months of calibration data across three categories: manual browsing, automation-assisted browsing, and the behavioral boundary between them. Ground truth data that could improve their detection accuracy without raising false positive rates on legitimate users.

They didn't want it. Report triaged as "Informative." Closed. No bounty. No follow-up. No questions.

Then the Lawsuits Dropped

Five weeks later. A German advocacy group called Fairlinked had independently reverse-engineered LinkedIn's client-side code. Their evidence pack triggered Ganan v. LinkedIn Corporation and Farrell v. LinkedIn in federal court.

Their findings and mine overlap on the same infrastructure. We went deep in different places.

| Area | My Report (Feb 28) | BrowserGate Lawsuits (Apr 7) |
|---|---|---|
| HUMAN Security / PerimeterX | Dual iframe, app_id, uc=scraping classification | Same. Zero-pixel hidden element, cookie injection |
| Behavioral biometrics | Hover intent, timing deltas, trackingId chain | 48 fingerprinting metrics per session |
| Extension scanning | Not found | 6,167 extension IDs in Webpack chunk 905, module 75023 |
| A/B defense framework | Experiment flags, Ember vs SDUI, 114 dumps analyzed | Not covered in detail |
| CSP bypass via extensions | Full Voyager API interception documented | Not covered |
| Opacity / DOM traps | CSS variable bindings, invisible clickable elements | Not covered |
| Voyager API disparity | Not covered | 0.07 vs 163,000 calls/sec. 2.25M:1 gap |

I missed the extension scanning entirely. That's the core of BrowserGate. Fairlinked found a 2.7MB minified JavaScript file with a hardcoded array of 6,167 Chrome extension IDs. The script probes chrome-extension://<id>/manifest.json and passively scans the DOM for mutations injected by specific extensions. It throttles itself using requestIdleCallback so it only runs when the browser is idle. Invisible to the user. Invisible in the network tab unless you're specifically watching for staggered requests.
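For anyone who wants to look for that pattern in their own traffic, a hedged Python sketch: given a list of request URLs exported from a browser session, count the distinct extension IDs being probed. It assumes the probes are visible in whatever export you have, which may not hold. Chrome extension IDs are 32 characters drawn from the letters a through p; the IDs in the demo are placeholders, not real extensions.

```python
import re

# Chrome extension IDs: 32 characters, each in the range a-p.
PROBE = re.compile(r"^chrome-extension://([a-p]{32})/manifest\.json$")


def probed_extension_ids(request_urls: list) -> set:
    """Return the distinct extension IDs whose manifest was requested."""
    ids = set()
    for url in request_urls:
        match = PROBE.match(url)
        if match:
            ids.add(match.group(1))
    return ids


# Placeholder log: two distinct probes, one repeat, one unrelated request.
log = [
    "chrome-extension://" + "a" * 32 + "/manifest.json",
    "chrome-extension://" + "b" * 32 + "/manifest.json",
    "chrome-extension://" + "a" * 32 + "/manifest.json",
    "https://www.linkedin.com/feed/",
]
print(len(probed_extension_ids(log)))  # 2
```

A page that requests manifests for thousands of distinct IDs it has no reason to know about is the signature described above, however the requests are spaced out.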

The list includes Islamic prayer calculators, ADHD focus aids, screen readers, political bias detectors, and extensions from over 200 competing B2B tools. When you require authenticated sessions tied to real names, scanning for a prayer app means "Hugo Palma has this religious software installed." That's GDPR Article 9 territory. The growth rate is what triggered the legal action: 38 extensions in 2017. 5,459 by December 2025. 6,167 by February 2026. Twelve new targets per day.

I didn't find that because I wasn't looking at the JavaScript bundles. I was looking at the DOM, the iframes, and the behavioral signals. Different entry point, different discoveries.

They didn't find the A/B framework, the CSP bypass, or the opacity traps. I did. Because I was the one whose click events were being eaten by invisible overlays at 2am while trying to make a scraper work.

Why I Submitted the Report

I wasn't chasing a bounty. If I wanted money, I wouldn't have spent three months running a scraper on a premium account that costs more than most bounties pay. I was looking for data discipline.

I'd built a scraper that ran undetected against one of the most heavily defended websites on the internet. For three months. On a premium account. While running ads. That last part was deliberate. In LinkedIn's system, a bot is cheap to ban. A paying customer who also runs ads? That's a friction point. I made myself expensive to ban so I could keep mapping the plumbing without getting ghosted by an automated flag.

When I submitted the report, I made them an offer. Full scraper code. Exact behavioral datasets. The parameters they were trying to profile anyway. Ground truth calibration data from my own usage across three categories. Manual browsing, automation-assisted, and the boundary between them. Data that could help them stop the false positives that hit legitimate users.

Free. All of it.

They didn't want it. Their triage team looked at a report from a solo dev who'd been writing code for a few months and decided it was "Informative." I get it from their perspective. I wasn't reporting a traditional vulnerability. No RCE, no SQL injection, no data breach. I was telling them their behavioral collection isn't disclosed and their defense architecture has structural gaps. That's a policy conversation, not a bug bounty topic.

But the structural gaps are real. CDP detection is a single point of failure. Extension-based automation bypasses it completely. I proved this and handed them the proof. They chose not to engage.

Reading the Jitters

I'm an engineer who was trained on 2KB of RAM. In that world, noise doesn't exist without a cause. You learn to read the machine's jitters because they always tell you something is about to break. I built detectors before I built scrapers. I mapped LinkedIn's plumbing because I refused to click "Apply" like a database operator. I documented their surveillance because the debug dumps made it impossible to ignore.

I wrote about this instinct in the embedded systems piece. A goroutine leak isn't software. It's a leaky actuator. A behavioral fingerprinting pipeline isn't a feature. It's a sensor array pointed at every user on the platform, and nobody told them they were being scanned.

The lawsuits cite the Computer Fraud and Abuse Act and the California Invasion of Privacy Act. CIPA is the dangerous one: $5,000 per violation, no requirement to prove economic harm. A billion users. Six thousand scans per page load.

LinkedIn's defense: the scanning "identifies extensions that scrape data without members' consent." They point to a privacy policy line about "web browser and add-ons." They call BrowserGate "a house of cards built entirely upon a fabrication."

Their own Senior Engineering Manager filed a sworn affidavit in the Munich proceedings admitting the company "invested in extension detection mechanisms" and that their systems "may have taken action against LinkedIn users that happen to have [certain extensions] installed."

Hard to call something a fabrication when your own engineer confirms it under oath.

The Receipt

I don't know how the lawsuits play out. I'm not a plaintiff, not a witness, not involved. I'm an engineer in Brazil who was trying to automate a job search and accidentally mapped the internals of a billion-dollar platform's surveillance architecture because my scraper kept breaking and I'm the kind of person who reads the dumps instead of restarting the process.

The report is still on HackerOne. Ticket #3578653. Timestamped February 28, 2026. Five weeks before the lawsuits were filed. If LinkedIn ever wants to read it again, they know where to find it.

Context

The HackerOne disclosure was submitted on February 28, 2026, through LinkedIn's official bug bounty program. Triaged as "Informative" and closed.

The BrowserGate class-action lawsuits (Ganan v. LinkedIn Corporation, Farrell v. LinkedIn) were filed on April 7, 2026, in the U.S. District Court for the Northern District of California.

I have no connection to Fairlinked, the plaintiffs, or any of the law firms involved. The overlap in findings is the result of two independent investigations looking at the same publicly served front-end code.

h17-webpilot, the automation tool that grew out of this work, is published on npm under Apache 2.0 with no site-specific code.

All testing was performed on my own authenticated premium account. No third-party data was exfiltrated, sold, or disclosed.